As a website owner or SEO specialist, you may have encountered the frustrating "Crawled - Currently Not Indexed" status in Google Search Console. This status can be perplexing and disheartening, as it means that Google has successfully crawled a page on your website but has chosen not to add that page to its index.

It is important to note that there could be several reasons why Google has made this decision, ranging from technical issues on your website to content-related concerns.

It is crucial to investigate and address these issues promptly to ensure that your website receives the visibility it deserves in search engine rankings.

Remember, optimizing your website for indexing is an ongoing process that requires continuous monitoring and improvements. By staying vigilant and proactive, you can increase the chances of having your website indexed and ultimately improve its online presence.

Let's review some of the reasons why your site may be facing this issue and provide actionable tips on how to fix it.

What does "Crawled - Currently Not Indexed" mean on Google Search Console?

By now, you're probably familiar with Google Search Console. If not, we recommend getting to know this tool, as it provides valuable insights into how your site is performing in search results and can help you identify any issues that might be preventing your pages from ranking well.

One of the most common issues that webmasters encounter in Google Search Console is a status saying that a page was crawled but is not currently indexed. But what does this mean exactly, and why is it important to fix?

Before we dive into the specifics of fixing the "Crawled - Currently Not Indexed" issue, let's first discuss why it's so important to have your website indexed by Google in the first place.

Why is it important to have your website indexed by Google?

Simply put, if your website isn't indexed by Google, it won't show up in Google's search results, and people searching on Google won't be able to find it. Tangent: Of course, there are other search engines, such as Bing, DuckDuckGo, You, Yep, and dozens more. However, since Google is the most widely used, we'll talk about this issue as it relates to Google Search.

Having your website indexed by Google is crucial for several reasons. It significantly enhances your site's visibility, making it discoverable to users who are searching for information or services related to your business. This increased visibility directly translates to higher organic traffic, as your site can appear in search engine results for relevant queries.

A well-indexed site is also more likely to attract a targeted audience. People who are actively searching for products or content that you offer are more likely to find you, which can lead to improved conversion rates, as visitors are already interested in what you have to provide.

Furthermore, indexing is not a one-time event. Google recrawls and reassesses pages over time, so a page that is indexed today needs to keep meeting current quality and technical standards to stay accessible in search.

In the competitive digital marketplace, being indexed by Google can also lead to indirect benefits such as increased brand recognition and credibility. When users frequently see your site in their search results, it can build trust and authority in your niche.

Lastly, proper indexing by Google is a cornerstone of search engine optimization (SEO). It allows you to utilize SEO strategies effectively, optimizing your content with keywords, meta tags, and other tactics to rank higher in search results, further amplifying your online presence and potential for business growth.

Reasons why your website might not be getting indexed by Google:

Now that we understand why having our websites indexed by Google is essential, let's look at some reasons why a site's pages may not be crawled or may not currently be indexed:

Technical issues with your website

Technical problems, such as server errors or broken links, can have a significant impact on the accessibility and indexing of certain pages by web crawlers. When a server error occurs, it means that the server hosting the website is unable to respond to the request made by the crawler. This can result in the crawler being unable to access the requested page, leading to potential indexing issues.

Similarly, broken links can also prevent crawlers from accessing specific parts of a website. A broken link occurs when a hyperlink on a webpage points to a non-existent or inaccessible resource. When a crawler encounters a broken link, it is unable to follow the path to the intended page, resulting in potential indexing problems for that particular page.

In both cases, these technical problems can prevent web crawlers from properly indexing the affected pages. As a consequence, these pages may not appear in search engine results or receive the necessary visibility to attract organic traffic. Website owners and administrators should regularly monitor for server errors and broken links to ensure optimal accessibility and indexing of their web pages.
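As a rough illustration of that monitoring, the sketch below groups URLs by the kind of HTTP status code they returned. It assumes you already have a mapping of URL to status code from your own crawl or server logs; the function names and bucket labels are hypothetical and not part of any Google tool.

```python
from typing import Dict, List


def classify_status(status: int) -> str:
    """Bucket an HTTP status code for a simple crawl audit."""
    if 200 <= status < 300:
        return "ok"
    if 300 <= status < 400:
        return "redirect"
    if status in (404, 410):
        # The target is missing or gone: links pointing here are broken.
        return "broken_link"
    if 400 <= status < 500:
        return "client_error"
    if 500 <= status < 600:
        return "server_error"
    return "unknown"


def audit(results: Dict[str, int]) -> Dict[str, List[str]]:
    """Group URLs by bucket, given a {url: status_code} mapping."""
    report: Dict[str, List[str]] = {}
    for url, status in results.items():
        report.setdefault(classify_status(status), []).append(url)
    return report
```

Anything that lands in the `server_error` or `broken_link` buckets is a candidate for a closer look before you worry about content quality.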

Low-quality content

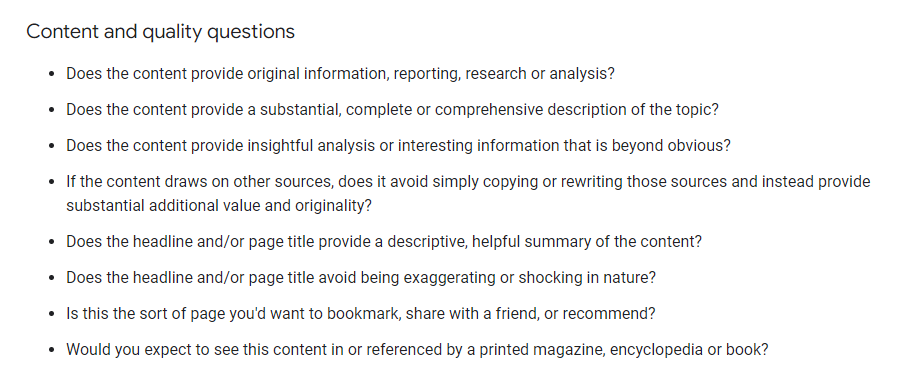

Google aims to provide users with the best possible search results, so it is unlikely to index pages that contain low-quality content. This could include thin or poorly written content, duplicate content, or pages that are stuffed with irrelevant keywords.

Duplicate content

Duplicate content can also cause issues when it comes to indexing as Google may not know which version of a page to rank in search results.

To make sure your website's content is unique and valuable, follow these strategies:

Create original content: Focus on producing high-quality, unique content that benefits your readers.

Do keyword research: Find relevant search terms to include strategically in your content for better visibility in search results.

Add a personal touch: Share personal insights and experiences to make your content more engaging.

Update and refresh content: Keep your content up-to-date by revisiting and improving existing articles or blog posts.

Cite and reference properly: Give credit to external sources to add credibility and avoid plagiarism issues.

Use multimedia elements: Enhance the user experience with images, infographics, videos, or audio clips.

By following these strategies, you can create standout content that attracts organic traffic and provides value to your audience. Remember, originality and uniqueness are crucial for online success.

Issues with your sitemap

If your website's sitemap is missing or contains errors, it can have a significant impact on the visibility and accessibility of your site. Without a proper sitemap, search engine crawlers may struggle to navigate and discover all of your site's pages, resulting in them not being indexed.

This means that important pages and content on your site may not appear in search engine results, making it difficult for users to find and access them. Errors in your sitemap can further complicate the crawling process, potentially causing certain pages to be overlooked or ignored altogether. To ensure optimal visibility and indexing of your site, it is crucial to have a well-structured and error-free sitemap that accurately reflects the layout and organization of your website.
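To make the idea of an error-free sitemap more concrete, here is a minimal Python sketch that parses a sitemap supplied as a string and reports a few common problems: invalid XML, entries that are not absolute URLs, and duplicate entries. It assumes the standard sitemaps.org namespace and only looks at `<loc>` elements; `check_sitemap` is a hypothetical helper name, and the checks are far from exhaustive.

```python
import xml.etree.ElementTree as ET
from typing import List, Tuple
from urllib.parse import urlparse

# Namespace used by standard XML sitemaps.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def check_sitemap(xml_text: str) -> Tuple[List[str], List[str]]:
    """Return (valid_urls, problems) for a sitemap document."""
    try:
        root = ET.fromstring(xml_text)
    except ET.ParseError as exc:
        return [], [f"invalid XML: {exc}"]

    urls: List[str] = []
    problems: List[str] = []
    seen = set()
    for loc in root.findall("sm:url/sm:loc", SITEMAP_NS):
        url = (loc.text or "").strip()
        parsed = urlparse(url)
        if parsed.scheme not in ("http", "https") or not parsed.netloc:
            problems.append(f"not an absolute http(s) URL: {url!r}")
            continue
        if url in seen:
            problems.append(f"duplicate entry: {url}")
            continue
        seen.add(url)
        urls.append(url)

    if not urls:
        problems.append("no valid <loc> entries found")
    return urls, problems
```

An empty `problems` list does not prove the sitemap is perfect, but a non-empty one is a quick signal that something needs fixing before you resubmit it.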

Robots.txt file blocking Google from crawling your site

The robots.txt file is an essential tool that provides instructions to web crawlers regarding which parts of a website they are permitted to explore and which ones they should avoid.

This file is mainly a way to manage crawler traffic, for example by keeping crawlers out of sections that offer no search value. It is not a security mechanism and does not by itself keep a page out of Google's index, so sensitive content needs proper access controls or a noindex directive instead. It is vital to configure the robots.txt file accurately so that search engine crawlers can reach every section you want to appear in search.

Neglecting this task may result in certain areas of the site being excluded from search engine indexes, which can negatively impact its visibility and accessibility to online users. As such, website owners and administrators must give careful attention to correctly configuring this file in order to maximize the site's visibility and facilitate a seamless crawling process for search engines.
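One way to sanity-check a robots.txt file is to test specific URLs against it. The sketch below uses Python's standard `urllib.robotparser` module for that; `is_crawl_allowed` is a hypothetical helper name, and the rules in the usage example are made up. Note that Python's parser may not match Google's own rule matching in every case (for example, with wildcard patterns), so treat the result as a first-pass check only.

```python
from urllib.robotparser import RobotFileParser


def is_crawl_allowed(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the given robots.txt text allows user_agent to fetch url."""
    parser = RobotFileParser()
    # parse() accepts the file's lines, so no network request is made here.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Example rules (hypothetical): one page is blocked for every crawler.
rules = "User-agent: *\nDisallow: /blocked-page.html\n"
print(is_crawl_allowed(rules, "https://www.example.com/blocked-page.html"))
print(is_crawl_allowed(rules, "https://www.example.com/other-page.html"))
```

If a page you want indexed comes back as not allowed, the matching `Disallow` rule is the first thing to review.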

Now let us look at how we can fix these problems:

How to fix the "Crawled - Currently Not Indexed" issue:

Check for technical issues and fix them

To resolve the indexing issue, work through a series of technical checks. These include analyzing the website's crawlability and indexability, ensuring that all pages are accessible to search engine crawlers, and reviewing meta tags and XML sitemaps. Resolving any server or URL redirection issues, improving website speed and performance, and regularly monitoring the site's index status are also crucial steps in addressing this issue effectively.

Follow these technical steps:

1. Review Google's Indexing Guidelines

Ensure your page complies with Google’s guidelines for being indexed. Review Google Search Essentials (formerly the Webmaster Guidelines) for any practices that might prevent indexing.

2. Check for Noindex Directives

Inspect your page for any unintentional noindex directives in the meta tags or in the X-Robots-Tag HTTP response header. Remove them if found.

<!-- Look for a line like this in your HTML and remove it -->

<meta name="robots" content="noindex">

3. Assess Canonical Tags

Ensure the canonical tag on the page points to the correct URL you wish to be indexed.

<!-- Ensure this points to the correct URL -->

<link rel="canonical" href="https://www.example.com/page-url">
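The noindex and canonical checks from steps 2 and 3 can also be scripted. The following is a minimal sketch using Python's built-in `html.parser`; the class and function names are hypothetical, it only inspects the HTML you pass in, and it does not look at HTTP headers such as X-Robots-Tag.

```python
from html.parser import HTMLParser
from typing import List, Optional


class IndexingSignals(HTMLParser):
    """Collect robots meta directives and the canonical URL from a page."""

    def __init__(self) -> None:
        super().__init__()
        self.robots: List[str] = []
        self.canonical: Optional[str] = None

    def handle_starttag(self, tag, attrs):
        attr = {name: (value or "") for name, value in attrs}
        if tag == "meta" and attr.get("name", "").lower() in ("robots", "googlebot"):
            content = attr.get("content", "")
            self.robots += [token.strip().lower() for token in content.split(",")]
        elif tag == "link" and "canonical" in attr.get("rel", "").lower().split():
            self.canonical = attr.get("href") or None


def inspect_html(html: str, expected_url: str) -> List[str]:
    """Return human-readable findings that could keep a page out of the index."""
    parser = IndexingSignals()
    parser.feed(html)
    findings: List[str] = []
    # "none" is shorthand for "noindex, nofollow".
    if "noindex" in parser.robots or "none" in parser.robots:
        findings.append("noindex directive present")
    if parser.canonical is None:
        findings.append("no canonical tag")
    elif parser.canonical != expected_url:
        findings.append(f"canonical points elsewhere: {parser.canonical}")
    return findings
```

An empty list means neither check found a problem in the markup; the comparison against `expected_url` is an exact string match, so trailing slashes and protocol differences will be reported.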

4. Look for Crawl Blocks in robots.txt

Make sure your robots.txt file isn’t blocking the page from being crawled.

# Check for disallow lines that might include your URL

User-agent: *

Disallow: /blocked-page.html

5. Check Internal and External Links

A page is more likely to be indexed if it's well-linked. Improve internal linking and seek quality external backlinks.

6. Improve Content Quality

Ensure the content is unique, substantial, and provides value. Thin or duplicate content is less likely to be indexed.

7. Submit the URL to Google

Use the URL Inspection Tool in Google Search Console to request indexing.

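8. Test the Live URL and Request Indexing

The legacy "Fetch as Google" feature has been retired and replaced by the URL Inspection tool. In Google Search Console, inspect your URL, use "Test Live URL" to confirm that Google can fetch and render the page, and then click "Request Indexing".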

9. Avoid Orphan Pages

Make sure that the page is accessible through at least one internal link.

10. Address Crawl Errors

In Search Console, check for crawl errors that might affect indexing and fix them.

11. Check for Manual Actions

In Google Search Console, check for any manual actions issued against your site and resolve them.

12. Analyze Page Speed and Mobile Usability

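Use tools like Google's PageSpeed Insights and Lighthouse to check performance and mobile usability (Google has retired the standalone Mobile-Friendly Test). Pages that are slow or hard to use on mobile can affect how Google crawls and evaluates your site.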

13. Inspect for Structured Data Errors

If you're using structured data, validate it using Google's Rich Results Test or the Schema Markup Validator (the successors to the retired Structured Data Testing Tool) and correct any issues.

14. Monitor Indexing Status Over Time

Sometimes pages take time to be indexed. Monitor the status over a few weeks before taking further action.

15. Consult Google's Forum or SEO Professionals

If you're unable to resolve the issue, consider seeking advice from the Google Search Central Help Community (formerly the Webmaster Help Forum) or consult with SEO professionals.

Each case may vary, so if these steps do not resolve the issue, further investigation into specific situations may be necessary.

Improve the quality of your content

Make sure that every page on your website has high-quality, unique, and engaging content. Avoid duplicating content from other websites, even if you have permission; instead, rewrite it in your own words before publishing it on your site.

Remove duplicate content

As mentioned earlier, duplicate content can confuse Google about which version of a page should appear in its search results, so removing or consolidating duplicates helps solve this problem.
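Before you can remove duplicates, you have to find them. The sketch below compares page texts using Jaccard similarity over three-word shingles, which is one simple way to flag near-duplicates; it is not how Google detects duplication. The function names, the shingle size, and the 0.8 threshold are all assumptions chosen for illustration.

```python
import re
from itertools import combinations
from typing import Dict, List, Set, Tuple


def shingles(text: str, size: int = 3) -> Set[tuple]:
    """Split text into overlapping word tuples, ignoring case and punctuation."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: str, b: str, size: int = 3) -> float:
    """Similarity between 0.0 (nothing shared) and 1.0 (same shingle sets)."""
    sa, sb = shingles(a, size), shingles(b, size)
    if not sa and not sb:
        return 1.0
    return len(sa & sb) / len(sa | sb)


def find_near_duplicates(pages: Dict[str, str], threshold: float = 0.8) -> List[Tuple[str, str]]:
    """Return URL pairs whose text similarity meets the threshold."""
    return [
        (url_a, url_b)
        for (url_a, text_a), (url_b, text_b) in combinations(pages.items(), 2)
        if jaccard(text_a, text_b) >= threshold
    ]
```

Each pair it returns is a candidate for merging, rewriting, or pointing to a single canonical URL. Comparing every pair is fine for a small site but becomes slow on large ones.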

Use Google Search Console to request indexing of specific pages

If you have already addressed technical issues, enhanced content quality, eliminated duplicate content, updated your sitemap, and reviewed your robots.txt file but are still encountering difficulties, you may want to consider using the URL Inspection tool in Google Search Console to request indexing for specific pages.

Doing so can potentially resolve the "Crawled - Currently Not Indexed" status, although a request does not guarantee that Google will index the page. It's important to understand the factors contributing to this issue and the appropriate methods for resolving it.

Additionally, it can be helpful to review your website's internal linking structure. Ensure that all important pages are interlinked properly, allowing search engine crawlers to easily navigate and index your site. Internal linking not only aids in better indexing but also improves the overall user experience by providing convenient navigation paths for visitors.
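To check interlinking, you can look for pages that cannot be reached by following internal links from the homepage. The sketch below assumes you already have a map of each page to the internal links it contains (for example, from a site crawl); `find_orphans` and the sample paths are hypothetical.

```python
from typing import Dict, Iterable, Set


def find_orphans(links: Dict[str, Iterable[str]], home: str) -> Set[str]:
    """Return known pages that are not reachable from `home` via internal links."""
    reachable = {home}
    stack = [home]
    # Depth-first walk over the internal link graph.
    while stack:
        page = stack.pop()
        for target in links.get(page, ()):
            if target not in reachable:
                reachable.add(target)
                stack.append(target)
    return set(links) - reachable


site = {
    "/": ["/about", "/blog"],
    "/about": ["/"],
    "/blog": ["/blog/post-1"],
    "/blog/post-1": [],
    "/landing": ["/"],  # links out, but nothing links to it
}
print(find_orphans(site, "/"))
```

Here `/landing` is reported because no other page links to it, even though it links back to the homepage.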

Another strategy you can employ is to optimize your website's loading speed. Slow-loading pages can negatively impact indexing as search engine bots may abandon crawling before fully accessing all your content. Make sure to optimize images, minimize unnecessary code, leverage browser caching, and utilize a reliable hosting provider to ensure swift page load times.

Also, consider implementing structured data markup on your website. Structured data helps search engines understand the context and content of your pages better. By adding structured data markup, you can provide additional information about your website's content, such as product details, reviews, event information, and more. This can enhance search engine visibility and increase the chances of your pages being indexed correctly.
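As a small illustration, structured data is commonly embedded as a JSON-LD script tag using schema.org vocabulary. The sketch below builds such a tag for an article; the helper name and the sample values are hypothetical, and the properties you actually need depend on your content type.

```python
import json


def article_json_ld(headline: str, author: str, date_published: str) -> str:
    """Build a JSON-LD script tag describing an article with schema.org terms."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,
    }
    # Escape "</" so a value can never close the script tag early.
    payload = json.dumps(data).replace("</", "<\\/")
    return '<script type="application/ld+json">' + payload + "</script>"


print(article_json_ld("Example headline", "Jane Doe", "2024-01-15"))
```

The resulting tag goes in the page's HTML, and it is worth validating the markup before relying on it.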

Lastly, regularly monitoring your website's performance through Google Search Console can provide valuable insights into indexing issues. Keep an eye on the Page indexing report (formerly the Index Coverage report) to identify any specific errors or warnings related to indexing. By promptly addressing these issues and following the recommended actions provided by Google, you can improve your site's overall indexing status.

Remember, resolving the "Crawled - Currently Not Indexed" status requires a systematic approach and constant attention to various factors. By implementing these strategies and staying proactive in maintaining a healthy website, you can increase the likelihood of your pages being indexed properly and achieving better visibility in search engine results.

By following the steps outlined above, you should be able to get back on track with getting your website indexed by Google once again!
